The Oscar OS: How a Unified Tech Stack Turns Cost into Care

The Core Problem: Fragmented IT Keeps U.S. Health Costs High

Legacy insurers manage dozens of vendor platforms (claims engines, provider directories, CRM tools) stitched together by nightly batch jobs. Every hand-off adds latency, duplication, and data blind spots, and those costs surface in premiums. Worse, each vendor upgrade triggers a cascade of regression testing, so innovation crawls while medical costs climb.

Oscar started from the opposite premise: put every transaction in one event stream and every business rule in one service mesh. If the system knows instantly that a claim posted, a lab result arrived, or a member searched “dermatologist in Dallas,” it can price, route, and engage in real time. That architecture—call it the Oscar OS—now processes billions of events a year, supports 1.8 million members, and underpins a medical-loss-ratio drop from 86 % (2023) to 75 % (Q1 2025).

Architecture Overview: One Spine, Many Services

Oscar’s platform runs on a cloud-native stack: Kubernetes clusters orchestrate microservices, Kafka and change-data-capture (CDC) pipelines stream changes, and PostgreSQL and Snowflake back hot and cold data, respectively. A single domain model represents members, providers, benefits, and claims. Because every service (eligibility, pricing, prior authorization, engagement) talks to that model via internal APIs, no one waits for overnight ETL.
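To make the "one spine, many services" idea concrete, here is a minimal in-memory sketch. It is not Oscar's code: `Event`, `EventBus`, and the handler names are hypothetical, and a plain Python class stands in for a Kafka-style topic. It only illustrates how several services can react to the same event without a batch hand-off.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class Event:
    """One fact on the shared stream (hypothetical shape)."""
    kind: str                      # e.g. "claim_posted", "lab_result_arrived"
    member_id: str
    payload: dict = field(default_factory=dict)


class EventBus:
    """In-memory stand-in for a Kafka-style topic (assumption, for illustration)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, kind: str, handler: Callable[[Event], None]) -> None:
        self._handlers[kind].append(handler)

    def publish(self, event: Event) -> None:
        # Every subscriber sees the event as soon as it is published.
        for handler in self._handlers[event.kind]:
            handler(event)


pricing_log: List[tuple] = []
engagement_log: List[str] = []

bus = EventBus()
# Two independent "services" consume the same event type.
bus.subscribe("claim_posted", lambda e: pricing_log.append((e.member_id, e.payload["amount"])))
bus.subscribe("claim_posted", lambda e: engagement_log.append(f"notify {e.member_id}"))

bus.publish(Event("claim_posted", "m-001", {"amount": 125.0}))
```

After the single `publish` call, both the pricing and engagement handlers have already processed the claim. A production system would add durable storage, ordering guarantees, and retries, none of which this sketch attempts.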

A service mesh enforces security, versioning, and (critically) policy as code. Plan benefits, network contracts, and regulatory constraints live as machine-readable rules that can be queried in milliseconds. When the policy or provider team changes a rule, the mesh propagates it across the stack without redeploying apps.
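A small sketch can illustrate what "policy as code" means in practice. It assumes a hypothetical `PolicyStore` holding versioned copay rules; the plan name, service name, and dollar amounts are invented for illustration. The point is that callers look the rule up at request time, so a rule change takes effect without touching the calling code.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class CopayRule:
    """A machine-readable benefit rule (hypothetical schema)."""
    plan: str
    service: str
    copay_usd: float
    version: int


class PolicyStore:
    """Central rule registry; services query it instead of hard-coding rules."""

    def __init__(self) -> None:
        self._rules: Dict[Tuple[str, str], CopayRule] = {}

    def put(self, plan: str, service: str, copay_usd: float) -> CopayRule:
        key = (plan, service)
        previous = self._rules.get(key)
        version = previous.version + 1 if previous else 1
        rule = CopayRule(plan, service, copay_usd, version)
        self._rules[key] = rule
        return rule

    def lookup(self, plan: str, service: str) -> CopayRule:
        return self._rules[(plan, service)]


store = PolicyStore()
store.put("Silver EPO", "dermatology_visit", 40.0)   # illustrative amount


def quote_copay(plan: str, service: str) -> float:
    # The pricing "service" reads the current rule on every call.
    return store.lookup(plan, service).copay_usd


before = quote_copay("Silver EPO", "dermatology_visit")
store.put("Silver EPO", "dermatology_visit", 35.0)   # policy team edits the rule
after = quote_copay("Silver EPO", "dermatology_visit")
```

Here `quote_copay` is never redeployed, yet `after` reflects the new rule. Keeping a `version` on each rule is one simple way to audit which policy was in force for a given decision.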

LLM-Driven Operations: From Claim Narration to A/B Generation

Sitting atop that data spine is an LLM layer: more than twenty GPT-4–powered services in production and another dozen in pilot.

Because all prompts, evaluation harnesses, and usage metrics feed a central library, teams avoid reinventing the wheel—and governance can track accuracy, bias, and PHI exposure in one dashboard.
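One way to picture a central prompt library is a registry that stores each prompt template alongside its evaluation scores. The sketch below is an assumption about how such a library could look, not a description of Oscar's internal tooling; `PromptLibrary`, `PromptEntry`, the template text, and the scores are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PromptEntry:
    name: str
    template: str
    eval_scores: List[float] = field(default_factory=list)

    def render(self, **values: str) -> str:
        # Fill the template's {placeholders} with caller-supplied values.
        return self.template.format(**values)

    def mean_score(self) -> Optional[float]:
        if not self.eval_scores:
            return None
        return sum(self.eval_scores) / len(self.eval_scores)


class PromptLibrary:
    """Shared registry so teams reuse prompts and governance sees one view."""

    def __init__(self) -> None:
        self._entries: Dict[str, PromptEntry] = {}

    def register(self, name: str, template: str) -> PromptEntry:
        entry = PromptEntry(name, template)
        self._entries[name] = entry
        return entry

    def get(self, name: str) -> PromptEntry:
        return self._entries[name]

    def record_eval(self, name: str, score: float) -> None:
        self._entries[name].eval_scores.append(score)

    def dashboard(self) -> Dict[str, Optional[float]]:
        # One row per prompt: its average evaluation score so far.
        return {name: entry.mean_score() for name, entry in self._entries.items()}


library = PromptLibrary()
library.register(
    "claim_narration",
    "Explain in plain English why claim {claim_id} was {status}.",
)
library.record_eval("claim_narration", 1.0)
library.record_eval("claim_narration", 0.5)

prompt_text = library.get("claim_narration").render(claim_id="C-123", status="denied")
summary = library.dashboard()
```

A real governance dashboard would track more than a single score (bias checks, PHI exposure, usage volume), but the same pattern applies: every team writes to and reads from one registry.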

Key AI Workflows and Their Impact

Claim Lifecycle Narration – Translates dense adjudication logs into plain-English explanations, sharply reducing provider call times and dispute cycles.

Electronic Lab Review Drafting – Auto-summarizes inbound lab panels for virtual primary-care providers, freeing clinicians to focus on medical judgment rather than paperwork.

Broker FAQ Assistant – Parses EOCs, SOBs, and regulatory PDFs to supply brokers with instant answers, cutting manual triage to near zero.

Eligibility Error Detector – Spots anomalies in exchange enrollment files before they contaminate downstream billing processes.

Automated A/B Variant Generation – Generates campaign copy variations on demand, enabling growth teams to test ten ideas in the time it once took to ship one.
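As an illustration of the kind of check an eligibility error detector might run, the sketch below applies a few deterministic rules to enrollment records. This is a rule-based stand-in, not the LLM-based workflow described above, and the field names (`member_id`, `date_of_birth`, `coverage_start`, `coverage_end`, `monthly_premium`) are assumed for the example.

```python
from datetime import date
from typing import Dict, List


def find_enrollment_anomalies(records: List[dict]) -> Dict[str, List[str]]:
    """Return a map of member_id -> list of problems found in that member's rows."""
    issues: Dict[str, List[str]] = {}
    seen_ids = set()
    for rec in records:
        problems: List[str] = []
        member_id = rec["member_id"]
        if member_id in seen_ids:
            problems.append("duplicate member_id")
        seen_ids.add(member_id)
        if rec["coverage_end"] < rec["coverage_start"]:
            problems.append("coverage ends before it starts")
        if rec["date_of_birth"] > rec["coverage_start"]:
            problems.append("born after coverage start")
        if rec["monthly_premium"] <= 0:
            problems.append("non-positive premium")
        if problems:
            issues.setdefault(member_id, []).extend(problems)
    return issues


# Hypothetical sample rows from an exchange enrollment file.
sample = [
    {"member_id": "m-001", "date_of_birth": date(1990, 5, 1),
     "coverage_start": date(2025, 1, 1), "coverage_end": date(2025, 12, 31),
     "monthly_premium": 410.0},
    {"member_id": "m-002", "date_of_birth": date(1985, 3, 12),
     "coverage_start": date(2025, 6, 1), "coverage_end": date(2025, 1, 31),
     "monthly_premium": 385.0},
    {"member_id": "m-001", "date_of_birth": date(1990, 5, 1),
     "coverage_start": date(2025, 1, 1), "coverage_end": date(2025, 12, 31),
     "monthly_premium": 410.0},
]

anomalies = find_enrollment_anomalies(sample)
```

Running the checks before records reach billing is the design point: a bad row is quarantined at ingestion rather than corrected after an invoice has gone out.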

Economic Levers: Turning Architecture into Margin

Real-time pricing – Faster signals let actuarial teams hold a smaller reserve buffer; each percentage point of MLR shaved frees roughly $80 million annually at today’s premium base.

Self-configuring contracts – A pilot AI tool converts provider agreements into machine-readable payment rules, shrinking adjudication lag and cutting over-payments.

Automation > Head-count – SG&A slid to 15.8 % of revenue in Q1 2025, from 19.1 % in 2024, largely because LLMs displaced manual claim reviews, note-taking, and data entry.

Capex-light expansion – New states and ICHRA employer products ride the same core; cost of entry is regulatory filing, not brick-and-mortar build-out.
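The MLR figure in the real-time pricing item can be unpacked with simple arithmetic. Because MLR is medical claims divided by premium revenue, one percentage point equals 1 % of the premium base, so roughly $80 million per point implies a base near $8 billion. The sketch below works that through. The 86 % to 75 % comparison is illustrative only: it mixes a full-year figure with a single quarter and applies both to one assumed premium base.

```python
SAVINGS_PER_POINT_USD = 80_000_000  # from the text: roughly $80M per MLR point


def implied_premium_base(savings_per_point_usd: float) -> float:
    """One MLR point is 1% of premium, so the base is 100x the per-point value."""
    return savings_per_point_usd * 100


def annual_savings(premium_base_usd: float, mlr_before_pct: float, mlr_after_pct: float) -> float:
    """Dollars freed when MLR falls from mlr_before_pct to mlr_after_pct."""
    return premium_base_usd * (mlr_before_pct - mlr_after_pct) / 100


base = implied_premium_base(SAVINGS_PER_POINT_USD)
one_point = annual_savings(base, 80, 79)
# Illustrative only: applies the 86% -> 75% move cited earlier to this one base.
eleven_points = annual_savings(base, 86, 75)
```

The per-point value scales linearly with premium volume, which is why the same MLR improvement is worth more as membership grows.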

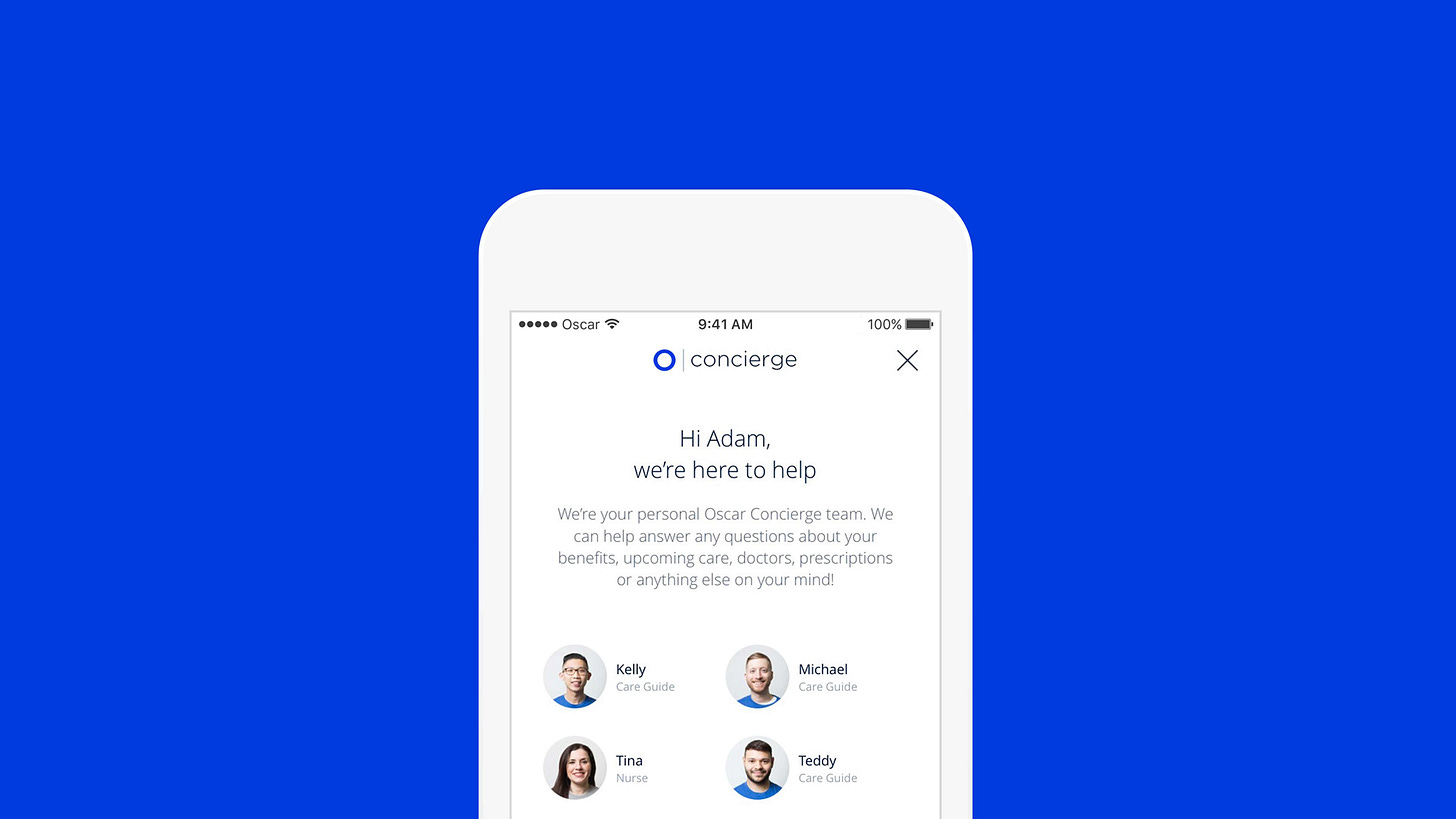

Experience Levers: A Consumer App, Not a Paper Chase

Natural-language provider discovery – Early prototypes map member-typed symptoms (“strange mole on arm”) to structured searches (“board-certified dermatologist within 10 miles, accepts Oscar Silver EPO”). Beta testers report 30 % faster appointment booking.

Contextual explainers – Hover-to-define modules demystify jargon (“deductible,” “formulary tier”) inside the app, reducing abandonment rates during plan selection.

100 % interaction coverage – LLM scoring of every member call fills the feedback gap left by low survey response rates, letting operations flag irritants before NPS slips.

The result: an NPS of 66 (industry peers cluster around single digits) and 44 % of members engaging digitally each month—a data flywheel that powers the next wave of personalization.
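The symptom-to-search mapping described under natural-language provider discovery can be sketched with a simple keyword lookup. This is a deliberately naive stand-in for the LLM the article describes; the keyword table, the `ProviderQuery` fields, and the default radius are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical keyword table; a production system would use a language model.
SYMPTOM_TO_SPECIALTY = {
    "mole": "dermatologist",
    "rash": "dermatologist",
    "knee pain": "orthopedist",
}


@dataclass(frozen=True)
class ProviderQuery:
    """Structured search derived from free text (hypothetical schema)."""
    specialty: str
    max_distance_miles: int
    plan: str
    board_certified: bool = True


def to_structured_query(
    text: str, plan: str, max_distance_miles: int = 10
) -> Optional[ProviderQuery]:
    lowered = text.lower()
    for keyword, specialty in SYMPTOM_TO_SPECIALTY.items():
        if keyword in lowered:
            return ProviderQuery(specialty, max_distance_miles, plan)
    return None  # no match: fall back to a general search or ask a follow-up


query = to_structured_query("strange mole on arm", "Oscar Silver EPO")
no_match = to_structured_query("need a checkup", "Oscar Silver EPO")
```

The value of the structured form is that the member's plan and network constraints are applied automatically, so the search only returns providers the member can actually use.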

Investor Lens: Why the Tech Stack Matters to Valuation

Traditional managed-care stocks trade on price-to-earnings and membership growth; software companies trade on revenue multiples and expansion potential. Oscar straddles both: core premium income funds a durable margin, while +Oscar licensing and AI-driven cost curves offer a SaaS-like upside the market is only starting to price.

Key markers to watch:

MLR trajectory – Consistent sub-80 % performance proves the algorithms beat medical inflation.

Platform revenue disclosure – A breakout of +Oscar fees could unlock a valuation re-rate.

AI cost-savings cadence – Management cites 1,660 bps of operating-cost take-out in 2024; maintaining that pace would push operating margin into the mid-teens, ahead of peers.

Conclusion: An Insurance Platform That Acts Like an OS

Oscar’s bet is that healthcare’s real competitive frontier is not actuarial tables but software orchestration. By owning every layer—from event-stream data model to LLM prompt library—the company can translate insight into action in near real time, driving both lower cost and better member experience.

If it executes, the prize is twofold: structurally lower claims costs that fund price competitiveness, and software-type margins on platform revenue that traditional insurers can’t easily match. That dual engine makes the Oscar OS one of the more compelling—and under-appreciated—stories in healthcare tech today.